What is "technological neutrality" and should it be a goal of broadband deployment?

I’ve long been confused by the term “technological neutrality” in broadband deployment conversations. Advocates say that if a provider can hit certain performance benchmarks, it doesn’t matter what technology is used. But not all of these technologies are created equal. Relying on provider-reported performance benchmarks alone ignores valuable data on the access technology.

For example, there are 210,000 housing units where the best available technology is DSL, yet they are still considered served by 100/20 broadband and thus ineligible for any funding under the IIJA. Even if the provider-claimed “maximum advertised speed” of 100 Mbps download and 20 Mbps upload is true, that is not an Internet connection I’d rely on in my home. It is painful to think that even after the investments the IIJA will make, we could still have rural Americans reliant on DSL to reach the Internet.

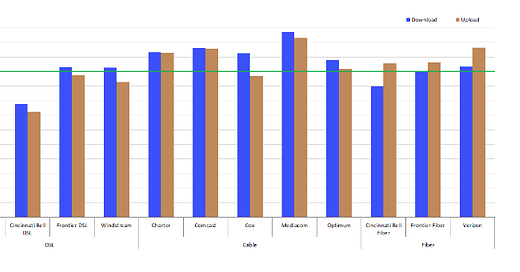

Since we’re reliant on “maximum advertised speed”, that term deserves a moment of analysis. The FCC publishes a Measuring Broadband America report, which gathers data from white box devices in consumers’ homes across the country. While I would like to see bigger sample sizes, in general it is a thorough and important report. It finds that DSL providers are the only ones whose advertised speeds overstate the bandwidth their networks can provide.

Looking at it from the other side, cable networks provide good home broadband. Of the housing units where cable is the best available option, 97% are in Census blocks that are “served” by broadband, meaning speeds better than 100/20. Generally, plans are available up to ~900/35. DOCSIS 4.0 and its promise of symmetrical bandwidth, fiber that runs deep through the middle mile, and investments in better queue management to reduce latency mean some of these copper cable networks have a lot of life left. Do we really want federal funds going to the 3% of these networks that are “underserving” their subscribers with worse than 100/20 Internet?

Fixed wireless is a tricky category that increasingly needs special attention. In 63% of the housing units where fixed wireless is the best available technology (and I rank it better than DSL), the service leaves the home “underserved”: it can’t reach 100/20 bandwidth. As many know, the technology is advancing, more spectrum is becoming available (soon in the 6 GHz band!), and large players like Verizon and T-Mobile are starting to offer the service for home Internet. But it will always have problems over long distances, particularly without line of sight and through dense tree cover.

A quick note on methodology: this is all based on the December 2020 Form 477 data from the FCC. While that data has well-known flaws, I think the problems can be overhyped, and the data is useful for comparative analysis like this. The housing unit data comes from Census block projections done by the FCC for 2019, which have their own problems, such as putting population into road medians, but are useful at high aggregation levels like this. Using those datasets, I calculate the best available broadband offering (excluding satellite) by throughput, putting each Census block and its housing units into a “served”, “underserved” or “unserved” bucket. Then I do a similar thing by technology, ranking the offerings from best to worst: fiber, cable, fixed wireless, DSL, no service at all.
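For readers who want to try something similar, here is a minimal Python sketch of that two-way bucketing. It is not the code behind the numbers above. The record layout, the technology labels, the function names, and the sample data are all hypothetical, and the cutoffs are assumptions: “served” is read as at least 100/20, and “unserved” is anything below 25/3, a threshold this post does not state.

```python
from collections import defaultdict

# Technology ranking from the post, best to worst. Labels are hypothetical;
# real Form 477 records use numeric technology codes that would need mapping.
TECH_RANK = ["fiber", "cable", "fixed wireless", "dsl", "none"]
TIER_RANK = ["served", "underserved", "unserved"]


def speed_tier(down_mbps, up_mbps):
    """Bucket one offering by advertised speed (thresholds are assumptions)."""
    if down_mbps >= 100 and up_mbps >= 20:
        return "served"
    if down_mbps >= 25 and up_mbps >= 3:
        return "underserved"
    return "unserved"


def summarize(offerings, housing_units):
    """Sum housing units by (best technology, best speed tier) per block.

    offerings: iterable of (block_id, technology, down_mbps, up_mbps)
    housing_units: dict mapping block_id -> number of housing units
    """
    # Blocks with no qualifying offering keep these defaults.
    best_tier = {block: "unserved" for block in housing_units}
    best_tech = {block: "none" for block in housing_units}

    for block, tech, down, up in offerings:
        # Skip satellite and anything else outside the ranking.
        if tech not in TECH_RANK or block not in housing_units:
            continue
        tier = speed_tier(down, up)
        if TIER_RANK.index(tier) < TIER_RANK.index(best_tier[block]):
            best_tier[block] = tier
        if TECH_RANK.index(tech) < TECH_RANK.index(best_tech[block]):
            best_tech[block] = tech

    totals = defaultdict(int)
    for block, units in housing_units.items():
        totals[(best_tech[block], best_tier[block])] += units
    return dict(totals)


# Made-up sample data, for illustration only.
offerings = [
    ("block-a", "dsl", 100, 20),
    ("block-a", "satellite", 25, 3),
    ("block-b", "cable", 900, 35),
    ("block-b", "dsl", 10, 1),
    ("block-c", "fixed wireless", 50, 10),
]
housing_units = {"block-a": 40, "block-b": 120, "block-c": 15, "block-d": 5}

totals = summarize(offerings, housing_units)
for (tech, tier), units in sorted(totals.items()):
    print(f"{tech:15} {tier:12} {units}")
```

In the sample, `block-a` lands in the (DSL, served) cell. That is the combination discussed at the top of this post: a block that counts as served on paper even though its best technology is DSL.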

There wasn’t the political will to do it, but I think we missed an opportunity with the IIJA to say: any American household that only has access to DSL Internet (or nothing at all) is eligible for grant assistance. To me, that is “neutrality”. And fairness.